EEG: REST & REPAIR

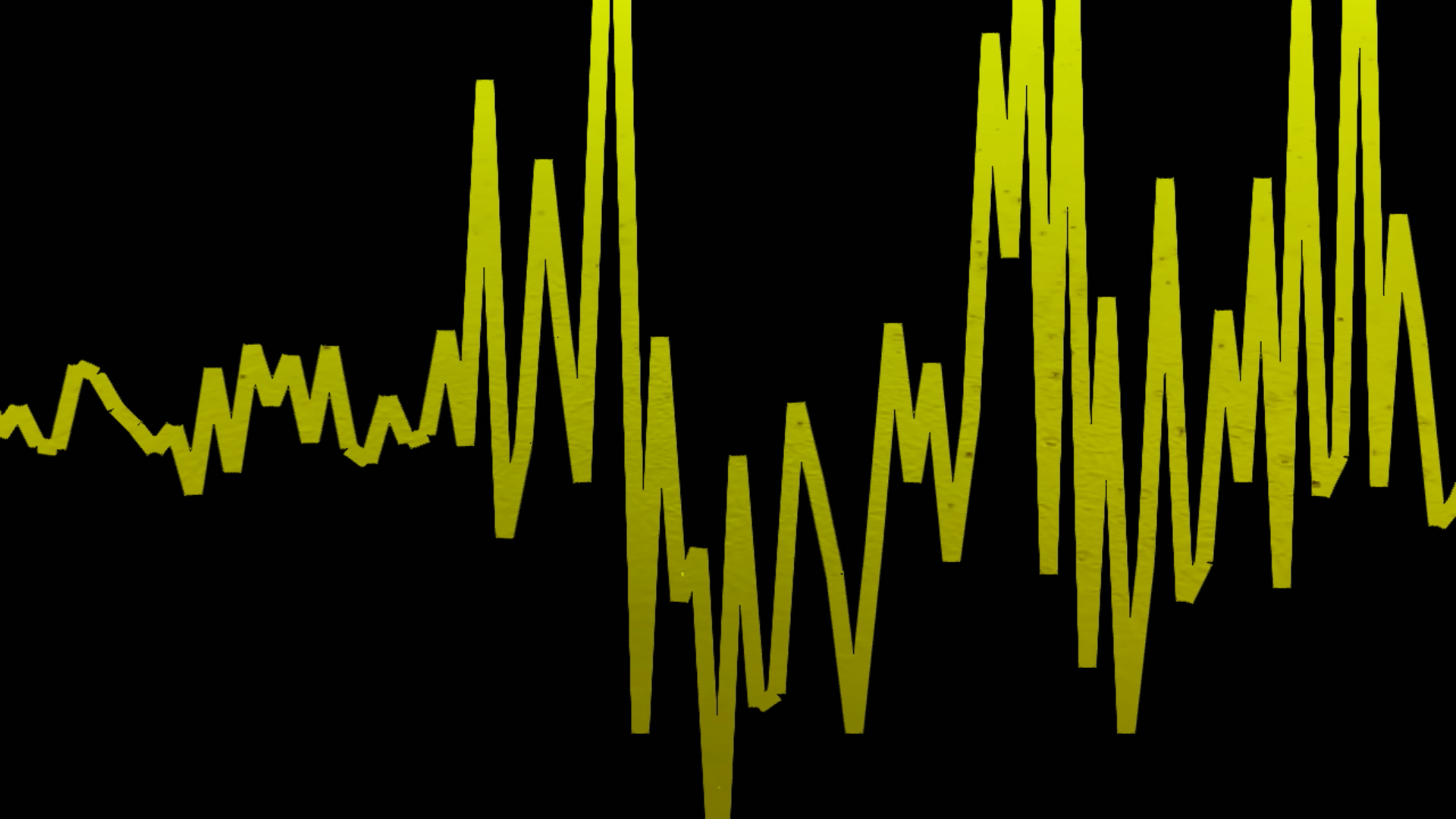

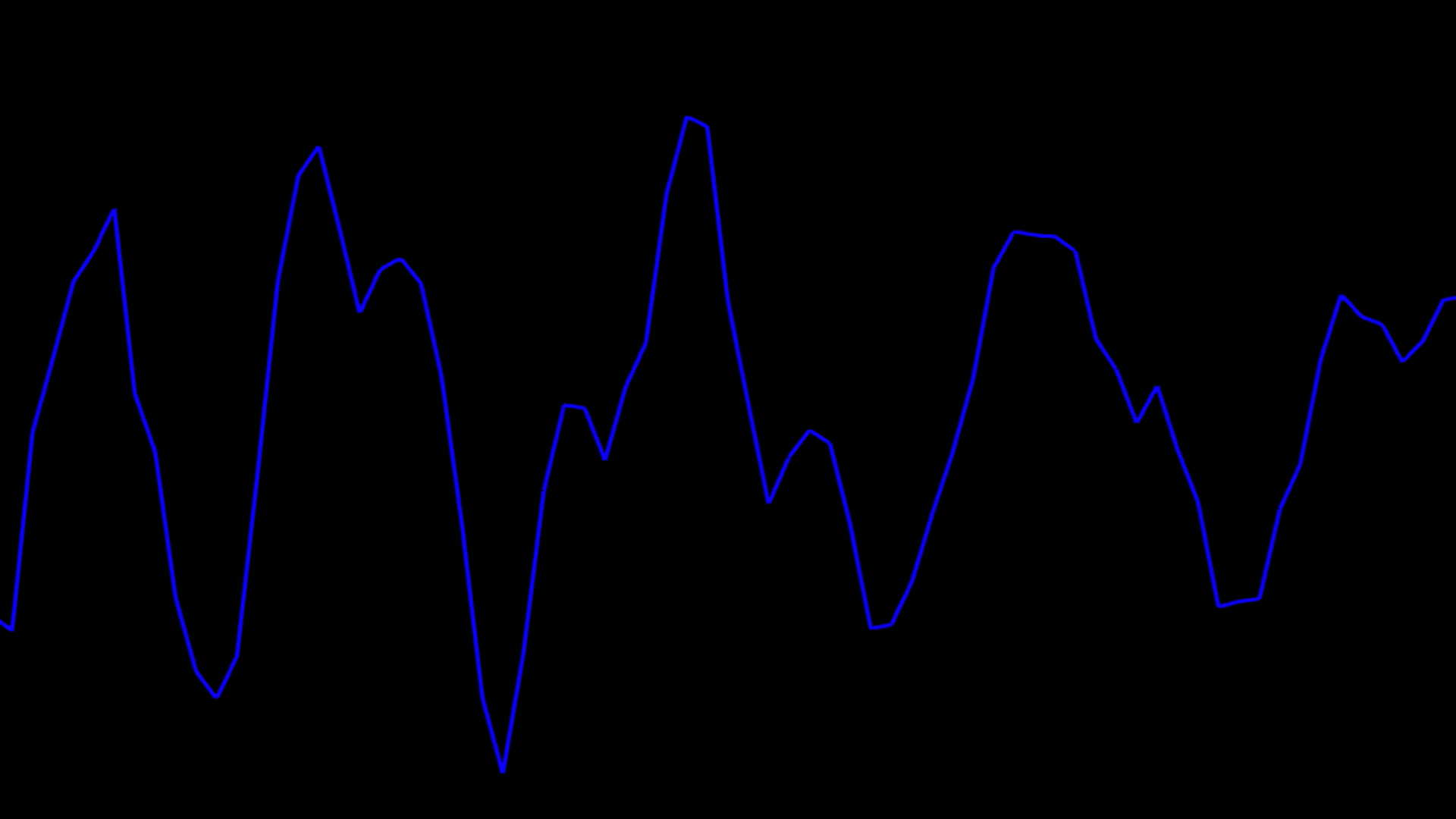

Interactive immersive tool & installation for audiovisual translation of EEG sleep data

[More examples to be added soon…]

This project investigates the audio, visual, and haptic translation of polysomnographic data—including EEG and auxiliary physiology—as mediums for interactive exploration and neurophenomenological insight.

Recordings spanning the full sleep cycle—wakefulness, drowsy onset, NREM stages 1–3 (light sleep, spindles, slow-wave sleep), and REM—are streamed from CSVs at the original sampling rate (typically 512 Hz) as independent OSC control streams.

In collaboration with Peter Simor (Université Libre de Bruxelles & Budapest Laboratory of Sleep and Cognition)

All CSV files were prepared and curated (e.g. scored and edited) by Dr. Peter Simor, who oversaw the data collection as director at the Budapest Laboratory of Sleep and Cognition.

The OSC streams are captured in Ableton Live via Max for Live devices (one OSC address per channel).

In one mode, the system becomes an “EEG choir”: each channel continuously modulates a dedicated synth voice (e.g., Operator), so micro-timing and subtle synchronizations become audible as fine pitch motion.

In another mode, the system becomes an “EEG orchestra”: the same incoming streams are downsampled and quantized inside Live (OSC→MIDI / OSC→modulation) to musical subdivisions (e.g., quarter-note or eighth-note relative to project tempo), enabling coherent instrumental textures while remaining tethered to the underlying data.

In parallel, a slower feature layer can compute band-power dynamics (delta/theta/alpha/beta/sigma) and stream them as separate OSC channels for shaping larger-scale musical/spatial behavior (tonality, timbre, diffusion, stability, etc.). In the current setup, a custom Max for Live global transport scale-root shifter uses dominant band state to select from four preselected scale types, while /eeg/segment_index shifts the global root by an editable fixed interval (currently 7 semitones, i.e. ascending fifths) so stage-to-stage changes remain clearly perceptible within dense textures.

The system settings are customizable just before running a stream, while real-time “knobs” live in the Ableton/M4L layer (mute/level, mapping depth, diffusion, etc.).

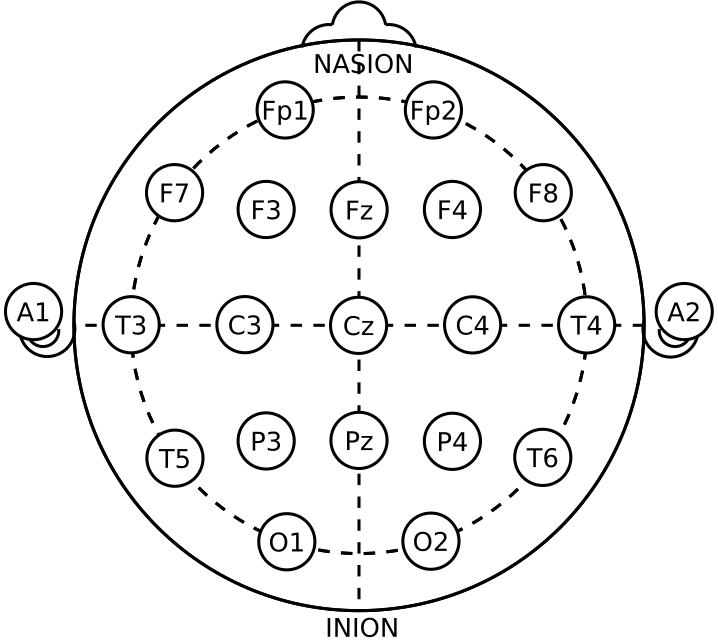

International 10–20 system electrode placement (21 electrodes). Diagram used as reference (public domain).

HOW THE SYSTEM WORKS : signal path

Two layers run in parallel: (1) continuous raw streaming for immediate “voice” motion, and (2) a slower computed feature layer that is translated into macro-scale sonic / spatial behaviors.

Data source (e.g. CSV) → streamer → OSC → Ableton Live (M4L) → audio / spatial / haptic output

Polysomnographic recordings (EEG + EOG/ECG/EMG) are streamed from CSV (typically at the original 512 Hz sampling rate) and routed as individual control streams. In the music software, these streams can (a) continuously modulate synthesized tones (think of a choir of continuously warbling voices), and/or (b) be downsampled/quantized to musical subdivisions (as orchestral instruments).
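As a rough illustration of the CSV → OSC step, the sketch below encodes one float per channel as an OSC 1.0 message and paces rows at the streaming rate, using only the Python standard library. It is not the project's streamer: the address scheme `/eeg/ch/<label>`, the default port 9000, and all function names are assumptions made for this example.

```python
import csv
import io
import socket
import struct
import time


def osc_pad(raw: bytes) -> bytes:
    """Null-terminate and pad to a multiple of 4 bytes, as OSC 1.0 requires."""
    raw += b"\x00"
    return raw + b"\x00" * (-len(raw) % 4)


def osc_message(address: str, value: float) -> bytes:
    """Encode one OSC message carrying a single big-endian float32 argument."""
    return osc_pad(address.encode("ascii")) + osc_pad(b",f") + struct.pack(">f", value)


def rows_to_messages(csv_text: str, prefix: str = "/eeg/ch"):
    """Yield one list of OSC messages per CSV row (one message per channel).

    The address scheme '<prefix>/<column label>' is hypothetical.
    """
    reader = csv.DictReader(io.StringIO(csv_text))
    for row in reader:
        yield [osc_message(f"{prefix}/{label}", float(v)) for label, v in row.items()]


def stream(csv_text: str, ip: str = "127.0.0.1", port: int = 9000, rate: float = 512.0):
    """Send each row over UDP, paced at `rate` rows per second."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    start = time.perf_counter()
    for i, messages in enumerate(rows_to_messages(csv_text)):
        # Schedule against the start time so timing errors do not accumulate.
        delay = start + i / rate - time.perf_counter()
        if delay > 0:
            time.sleep(delay)
        for msg in messages:
            sock.sendto(msg, (ip, port))
    sock.close()
```

Calling `stream(open("excerpt.csv").read())` (file name hypothetical) would send one message per channel per row to a listener on the local machine.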

Raw layer: each channel as a continuous voice or (downsampled) instrument.

Feature layer: band-power features (computed across selected EEG electrodes) generate larger contours and event-like cues at a slower update rate; these can steer tonality (key/scale), timbre, diffusion, and stability. In the current prototype, a custom M4L global transport shifter compares delta/theta/alpha/beta, chooses the dominant band, maps it to one of four preselected global scales, and derives the root from the streamed /eeg/segment_index by an editable fixed semitone step (currently +7 for perceptually clearer stage-to-stage motion).

WHAT VISITORS CAN DO : interaction

The interface is designed for exploratory listening—like moving a microscope around an inner landscape.

- Solo / mute / rebalance channels to compare regions (e.g., frontal vs occipital; left vs right).

- Contrast brain vs physiology by emphasizing EEG or isolating EOG / ECG / EMG.

- Stage comparison (wake → N1 → N2 → N3 → REM) by switching excerpts or selecting portions of recordings.

- Focus listening modes (e.g., “micro-detail” with or without broader “sleep weather” patterns).

FEATURE LAYER: “SLEEP WEATHER” : detecting patterns in brain activity

The system can compute power in different frequency bands (delta, theta, alpha, beta, sigma) and map each to perceptual qualities such as density, agitation, brightness, stability, depth, and spatial diffusion.

Features are computed on short windows (seconds) with overlapping hops, then streamed (via OSC) in sync with (but at a slower update rate than) the raw layer.
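A minimal sketch of windowed band-power extraction, assuming conventional approximate band edges (the sigma edges are configurable in the streamer, and the other edges used by the project are not stated here). It uses a plain DFT periodogram from the standard library for clarity; a real implementation would more likely use an FFT library and a tapering window. All names and defaults are illustrative.

```python
import cmath
import math

# Band edges in Hz: conventional approximations, assumed for this sketch.
BANDS = {"delta": (0.5, 4.0), "theta": (4.0, 8.0), "alpha": (8.0, 12.0),
         "sigma": (12.0, 15.0), "beta": (15.0, 30.0)}


def band_powers(window, rate):
    """Return {band: power} for one window using a plain DFT periodogram."""
    n = len(window)
    mean = sum(window) / n
    centered = [x - mean for x in window]  # remove the DC offset
    powers = dict.fromkeys(BANDS, 0.0)
    for k in range(1, n // 2 + 1):
        freq = k * rate / n
        band = next((b for b, (lo, hi) in BANDS.items() if lo <= freq < hi), None)
        if band is None:
            continue  # this frequency bin lies outside every band
        coeff = sum(x * cmath.exp(-2j * math.pi * k * i / n)
                    for i, x in enumerate(centered))
        powers[band] += abs(coeff) ** 2 / n
    return powers


def sliding_features(signal, rate, window_s=2.0, hop_s=0.5):
    """Yield (start_time_s, band_powers) for overlapping windows."""
    n_win, n_hop = int(window_s * rate), int(hop_s * rate)
    for start in range(0, len(signal) - n_win + 1, n_hop):
        yield start / rate, band_powers(signal[start:start + n_win], rate)
```

With a 2 s window and a 0.5 s hop, each feature frame overlaps the previous one by 75%, which gives the slower but still smooth update rate described above.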

| BAND / FEATURE | PERCEPTUAL ROLE (example mapping) |
|---|---|
| Delta (slow-wave) | “DARK / HEAVY”: thickness, gravity, slow spatial breathing |
| Theta | “DREAM”: depth, drift, internal motion |
| Alpha | “STILL”: stability, reduced jitter, calmer edge |
| Beta | “EDGE”: forwardness / arousal, brightness, tension |
| Sigma (spindle range) | Spindle “shimmers”: transient brightening / fluttering texture |

The band-to-scale mapping was chosen by balancing modal brightness with tonal ambiguity and affective character. Delta is currently mapped to Iwato because of its sparse, hollow, subterranean quality, which evokes deep sleep better than a conventionally dark mode alone. Theta is mapped to Whole Tone because theta is treated as dream-state material: floating, liminal, and weakly grounded, which Whole Tone expresses through symmetry and tonal suspension. Alpha is mapped to Major because it best represents calm, stable, coherent wakefulness. Beta is mapped to Lydian Augmented because it conveys the highest degree of brightness, lift, shimmer, and activation. The result is a four-state musical logic of deep sleep → dreaming → relaxed coherence → heightened cognition.

In the current transport-scale shifter, delta/theta/alpha/beta compete as a dominant-band selector feeding a four-scale whitelist, so the exact scale assignments remain editable in the Max patch. Sigma currently remains available as a separate spindle/shimmer control layer rather than acting as the main scale selector.

Sleep stage / segment identity also shapes tonal structure. The streamed /eeg/segment_index is converted to a global root by stepping through an editable fixed semitone interval; the current setting moves each successive segment by 7 semitones (ascending fifths). This was chosen because smaller root shifts felt too subtle relative to the density of simultaneous sonic information: fifths are more clearly audible from one segment to the next, and the interval itself remains editable in the patch.

(Mapping is intentionally adjustable: the same features can drive sound, light, spatialization, or haptics, depending on installation context.)
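The dominant-band scale selection and the segment-to-root stepping described above can be sketched as follows. The actual logic lives in a Max for Live patch; this Python version only illustrates the arithmetic. The interval lists are the standard pitch-class sets for the named scales. The base root of 0 (C), the wrap to a single octave via modulo 12, and the assumption that band powers arrive already scaled so they can be compared directly are all choices made for this example.

```python
# Pitch classes (semitones above the root) for the four scales named in the text.
SCALES = {
    "delta": ("Iwato", [0, 1, 5, 6, 10]),
    "theta": ("Whole Tone", [0, 2, 4, 6, 8, 10]),
    "alpha": ("Major", [0, 2, 4, 5, 7, 9, 11]),
    "beta": ("Lydian Augmented", [0, 2, 4, 6, 8, 9, 11]),
}


def dominant_band(powers):
    """Pick the strongest of delta/theta/alpha/beta; sigma is ignored here."""
    candidates = {b: powers[b] for b in SCALES if b in powers}
    return max(candidates, key=candidates.get)


def global_root(segment_index, step=7, base_root=0):
    """Step the root by a fixed interval per segment, wrapped to one octave."""
    return (base_root + segment_index * step) % 12


def transport_state(powers, segment_index, step=7):
    """Combine scale choice and root into one description of the tonal state."""
    band = dominant_band(powers)
    name, intervals = SCALES[band]
    root = global_root(segment_index, step)
    return {"band": band, "scale": name, "root": root,
            "pitch_classes": sorted((root + i) % 12 for i in intervals)}
```

With the default step of 7, segment indices 0, 1, 2, 3 give roots 0, 7, 2, 9 (C, G, D, A under the base-root assumption), so each new segment lands a fifth above the previous one.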

PHYSIOLOGY LAYER : eog | ecg | emg

Auxiliary physiology is treated as an equal musical / physical partner.

- ECG (raw + derived): the raw ECG channel streams continuously, and a parallel detector extracts discrete heartbeat events. The system emits a short /eeg/ecg/beatpulse at each detected beat, plus continuous descriptors /eeg/ecg/ibi_ms (inter-beat interval, held between beats) and /eeg/ecg/bpm (computed as 60,000 / IBI, also held between beats).

- Heartbeat percussion (event-timed, not tempo-quantized): beat pulses trigger a drum voice in Ableton. A small Max “edge + refractory” stage prevents re-triggering: it fires only on the rising edge of each beat pulse and enforces a minimum interval (debounce / refractory) so the drum does not double-hit when upstream pulses are sampled.

- Heart-rate modulation (IBI / BPM): IBI and BPM are used as slow control signals to shape the character of the ECG drum chain (e.g., by modulating the gain of selected layers and/or mix parameters), so changes in cardiovascular state register as changes in intensity and balance rather than as extra triggers.

- ECG “energy envelope” (optional layer): the continuous ECG waveform can also be treated as an envelope (rectified + smoothed) to gently animate the gain/presence of the ECG chain and/or the broader physiology bus (ECG/EOG/EMG), adding subtle “organismic” motion beyond discrete beat hits.

- EOG + EMG: eye (EOG) and muscle (EMG) channels stream as continuous signals and are rendered as their own instrumental voices (synth / sampled textures). The mappings are intentionally chosen to echo the rhythmicity and complexity of each signal.
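The optional ECG “energy envelope” mentioned in the list above (rectified + smoothed) could look roughly like this. The one-pole smoother, the 150 ms time constant, the gain floor and depth, and the assumption that the ECG is already normalized to about ±1 are all illustrative choices, not settings taken from the project.

```python
import math


def energy_envelope(samples, rate, smooth_ms=150.0):
    """Full-wave rectify, then smooth with a one-pole low-pass filter."""
    # Per-sample smoothing coefficient for the chosen time constant.
    alpha = 1.0 - math.exp(-1.0 / (rate * smooth_ms / 1000.0))
    env, out = 0.0, []
    for x in samples:
        env += alpha * (abs(x) - env)  # move a fraction of the way to |x|
        out.append(env)
    return out


def to_gain(env, floor=0.6, depth=0.4):
    """Map an envelope value in [0, 1] to a gentle gain modulation."""
    return floor + depth * min(1.0, max(0.0, env))
```

Keeping a non-zero floor means the envelope only animates the presence of the chain rather than gating it on and off.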

Reliability note: where needed, simple cleanup is applied at the trigger stage (edge detection + refractory/debounce) to ensure one clean event per beat, while keeping raw streams available for inspection and alternative mappings.
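The “edge + refractory” cleanup and the IBI / BPM arithmetic can be sketched in a few lines. In the installation this stage is a Max patch; the Python below is only an illustration, and the threshold and the 250 ms refractory period are assumed values.

```python
def clean_beats(pulse, rate, threshold=0.5, refractory_ms=250.0):
    """Return sample indices of accepted beats.

    A beat is accepted on a rising edge (low -> high crossing of `threshold`)
    that occurs at least `refractory_ms` after the previous accepted beat.
    """
    beats, prev_high, last = [], False, None
    min_gap = refractory_ms / 1000.0 * rate  # refractory period in samples
    for i, v in enumerate(pulse):
        high = v > threshold
        if high and not prev_high and (last is None or i - last >= min_gap):
            beats.append(i)
            last = i
        prev_high = high
    return beats


def ibi_and_bpm(beats, rate):
    """Return (ibi_ms, bpm) pairs for consecutive beats; bpm = 60,000 / ibi_ms."""
    out = []
    for a, b in zip(beats, beats[1:]):
        ibi_ms = (b - a) / rate * 1000.0
        out.append((ibi_ms, 60000.0 / ibi_ms))
    return out
```

A pulse that stays high for several samples, or that re-fires a few samples later, therefore yields a single trigger, and two beats one second apart give an IBI of 1000 ms and 60 BPM.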

CUSTOMIZATION OPTIONS : streamer & run menu

These options are chosen before streaming (in the CSV→OSC streamer and run menu). Real-time controls (mute/level/mapping depth/etc.) live in the Ableton/M4L layer.

Configured: Menu prompt = asked each run; Menu auto = set silently.

Streamer (CSV → OSC)

| Option | What it does | Configured |
|---|---|---|
| --rate | Streaming sample rate in Hz (default 512). | Menu prompt |
| --seconds / --offset-seconds | Excerpt length + start offset (blank = full file). | Menu prompt |
| --reverse | Reverse playback. | Menu prompt |
| --ip / --port | OSC destination (Live host/port). | Menu prompt |
| --solo-label / --solo-index | Solo one channel. | Menu prompt |
| --select-labels / --select-indices | Stream selected channels only. | Menu prompt |
| --features | Enable/disable feature layer (off/global). | Menu prompt |
| --feature-source | Which channels feed features. | Menu prompt |
| --feature-window / --feature-hop | Feature window + update interval. | Menu prompt |
| --sigma-band | Sigma band edges (lo,hi Hz). | Menu prompt |
| --feature-agg (+ per-band overrides) | Aggregation across channels (median/mean/p90/max…). | Menu prompt |
| --feature-boost | Optional rescale (e.g., REM). | Menu prompt |
| --ecg-derived | Enable beat/IBI/BPM streams (auto/on/off). | Menu prompt |
| --ecg-index | Force ECG column index. | Menu prompt |
| --report / --report-dir | Write text/plots exports. | Menu prompt |
| --video (+ format/fps/window/dir) | Render plot video (off/features/bpm/both) and set export format. | Menu prompt |
| --segment-index / --segment-name | Send segment identity so Live can label excerpts and drive structural mappings such as global root changes. | Menu auto |
| --send-segment-markers | Send segment boundary gate + optional metadata. | Menu auto |
| --zero-channels-at-start / --zero-channels-at-end | Send zeros at excerpt boundaries. | Menu auto |
| --delimiter auto | Delimiter sniffing for CSV import. | Menu auto |
| --feature-mode precompute / --feature-align centered | Precompute caches and center feature timing. | Menu auto |

Additional developer-level parameters exist (naming, network robustness, detector tuning), but are not exposed in the run menu.
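To make a few of the options above concrete, here is a hedged sketch of how a subset of the flags might be parsed and how the excerpt options translate into row indices. The flag names come from the table; everything else is an assumption for illustration, including the defaults other than the 512 Hz rate, the use of `argparse`, and the idea that the excerpt is cut using the same rate as streaming. It does not describe the real streamer's internals.

```python
import argparse


def build_parser():
    """Parser for an illustrative subset of the streamer flags."""
    p = argparse.ArgumentParser(description="CSV -> OSC streamer (illustrative subset)")
    p.add_argument("--rate", type=float, default=512.0)
    p.add_argument("--seconds", type=float, default=None)       # None = full file
    p.add_argument("--offset-seconds", type=float, default=0.0)
    p.add_argument("--reverse", action="store_true")
    p.add_argument("--ip", default="127.0.0.1")                 # assumed default
    p.add_argument("--port", type=int, default=9000)            # assumed default
    p.add_argument("--features", choices=["off", "global"], default="global")
    return p


def select_excerpt(rows, args):
    """Cut the requested excerpt out of `rows` and optionally reverse it."""
    start = int(round(args.offset_seconds * args.rate))
    if args.seconds is None:
        stop = len(rows)
    else:
        stop = start + int(round(args.seconds * args.rate))
    excerpt = rows[start:stop]
    return excerpt[::-1] if args.reverse else excerpt
```

For example, `--offset-seconds 60 --seconds 30` at 512 Hz would select rows 30720 to 46079 under these assumptions.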

In the current Ableton/M4L layer, the streamed segment index is converted to global root using an editable fixed-step formula (currently 7 semitones), while the dominant band among delta/theta/alpha/beta selects one of four editable global transport scales.

Run menu (batch playback)

- Select multiple staged excerpts and play them sequentially.

- Optionally precompute feature caches (and exports) first to keep playback gapless.

- Choose aggregation presets for features (event/rem/custom) and optional boosts.

- Optional per-stage exports: reports (text/plots) and video renders (mp4/gif) in a dedicated folder structure.

LISTENING GUIDE

A set of listening cues: these are common tendencies, not guarantees. Sleep stages are variable, but they have detectable—and perceptible—signatures.

- Wake: often more alpha/beta presence; faster “edge” motion.

- N1 (drowsy onset): alpha tends to soften; theta often emerges as drifting depth.

- N2: spindles can appear as brief shimmering bursts; K-complexes can read as sudden large-scale gestures.

- N3 / SWS: delta dominance—slow, heavy waves; strong synchronizations can feel like collective breathing.

- REM: mixed-frequency textures; EOG gestures may become more prominent.

SONIFICATION APPROACHES

Orchestral instrument mappings: downsampled / quantized streams drive MIDI synth and sample instruments at musically meaningful rates. This supports coherent instrumental texture.
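The arithmetic behind quantizing a stream to musical subdivisions can be illustrated as below. In the installation this happens inside Live; the Python here is only a sketch. Averaging each block, the input range of ±1, and the MIDI note range 48 to 72 are assumptions for the example.

```python
def samples_per_step(rate, bpm, steps_per_beat):
    """Number of input samples covered by one musical step."""
    return rate * 60.0 / bpm / steps_per_beat


def quantize_to_notes(signal, rate, bpm=120.0, steps_per_beat=2,
                      lo=-1.0, hi=1.0, note_lo=48, note_hi=72):
    """Reduce the signal to one MIDI note number per musical step.

    steps_per_beat=1 gives quarter notes and 2 gives eighth notes,
    relative to the project tempo `bpm`.
    """
    step = samples_per_step(rate, bpm, steps_per_beat)
    notes = []
    for s in range(int(len(signal) / step)):
        block = signal[int(round(s * step)):int(round((s + 1) * step))]
        mean = sum(block) / len(block)                 # downsample by averaging
        norm = min(1.0, max(0.0, (mean - lo) / (hi - lo)))
        notes.append(note_lo + round(norm * (note_hi - note_lo)))
    return notes
```

At 512 Hz and 120 BPM, one eighth note spans 128 samples, so each note summarizes a quarter of a second of data.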

Synth choir mappings: each channel continuously modulates a dedicated synth voice in real time. This preserves micro-timing and yields a richly textured “choral” surface where rhythms and synchronizations become audible through fine pitch motion.
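One way to picture the continuous “choir” mapping is a per-sample pitch offset around a fixed base pitch for each voice. This is a sketch under assumptions: the base frequency, the modulation depth in cents, and the ±1 input range are invented for illustration, and in practice the modulation is applied to synth parameters inside Live.

```python
def sample_to_frequency(value, base_hz=220.0, depth_cents=200.0, lo=-1.0, hi=1.0):
    """Map one sample to a frequency within +/- depth_cents of base_hz."""
    norm = min(1.0, max(0.0, (value - lo) / (hi - lo)))  # clamp to 0..1
    cents = (norm * 2.0 - 1.0) * depth_cents              # -depth .. +depth
    return base_hz * 2.0 ** (cents / 1200.0)


def choir_frequencies(values, base_pitches_hz, depth_cents=200.0):
    """One frequency per channel, each voice centered on its own base pitch."""
    return [sample_to_frequency(v, base, depth_cents)
            for v, base in zip(values, base_pitches_hz)]
```

Because every incoming sample moves the pitch, nothing is averaged away, which is what lets micro-timing remain audible as fine pitch motion.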

In each case, band-power features can be computed and added as perceivable layers (of tonality, timbre, etc.).

These approaches can be mixed (e.g., continuous choir + quantized orchestra layer), and the same features can drive sound, light, spatialization, or haptics depending on installation context.

The physiology layers can also be mapped directly to the synth choir, but it seems more fitting that they (or at least the ECG streams) remain mapped to percussive and synth sounds: the ECG in particular has been carefully calibrated with a custom-built Max patch that filters for the peak values most likely to correspond to heartbeats.

CHANNEL MAP & SIGNALS : legend

EEG labels follow the International 10–20 naming convention (F/C/T/P/O, left/right, midline).

| Signal group | Examples |
|---|---|
| EEG (scalp) | Frontal (F*), Central (C*), Temporal (T*), Parietal (P*), Occipital (O*) |
| EOG | Eye channel(s) |
| ECG | Heart electrical activity (optionally: beat triggers + IBI/BPM) |
| EMG | Muscle tone / activity |

(Exact channel availability depends on the recording montage and the excerpt.)

EEG data from two (of 20+ polysomnographic) channels are mapped and scaled into synthesized acoustic frequencies, which drive a subwoofer whose vibrations set a dilatant (shear-thickening, non-Newtonian) fluid in motion, creating a visual and haptic embodiment of neural rhythms during SWS (slow-wave sleep).

SWS is a sleep stage during which significant clearance of reactive oxygen species (ROS) occurs (essential for cellular repair and cancer prevention). The result is a visual/haptic metaphor for the resting body as an active site of repair…


The project includes interactive participatory public events (@IMéRA) exploring making and activating dilatant-covered subwoofers with audified data…

Interactive EEG sonification-cymatification-haptification…

Biofeedback EEG explorations:

https://dani.oore.ca/jade/

Late nights with Peter Simor editing & sonifying EEG sleep data in my IMéRA studio…